import os

from pathlib import Path

iskaggle = os.environ.get('KAGGLE_KERNEL_RUN_TYPE', '')

if iskaggle: path = Path('../input/titanic')

else:

    path = Path('titanic')

    if not path.exists():

        import zipfile,kaggle

        kaggle.api.competition_download_cli(str(path))

        zipfile.ZipFile(f'{path}.zip').extractall(path)

import torch, numpy as np, pandas as pd

np.set_printoptions(linewidth=140)

torch.set_printoptions(linewidth=140, sci_mode=False, edgeitems=7)

pd.set_option('display.width', 140)

df = pd.read_csv(path/'train.csv')

df

| | PassengerId | Survived | Pclass | Name | Sex | Age | SibSp | Parch | Ticket | Fare | Cabin | Embarked |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 0 | 3 | Braund, Mr. Owen Harris | male | 22.0 | 1 | 0 | A/5 21171 | 7.2500 | NaN | S |
| 1 | 2 | 1 | 1 | Cumings, Mrs. John Bradley (Florence Briggs Th... | female | 38.0 | 1 | 0 | PC 17599 | 71.2833 | C85 | C |
| 2 | 3 | 1 | 3 | Heikkinen, Miss. Laina | female | 26.0 | 0 | 0 | STON/O2. 3101282 | 7.9250 | NaN | S |
| 3 | 4 | 1 | 1 | Futrelle, Mrs. Jacques Heath (Lily May Peel) | female | 35.0 | 1 | 0 | 113803 | 53.1000 | C123 | S |
| 4 | 5 | 0 | 3 | Allen, Mr. William Henry | male | 35.0 | 0 | 0 | 373450 | 8.0500 | NaN | S |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 886 | 887 | 0 | 2 | Montvila, Rev. Juozas | male | 27.0 | 0 | 0 | 211536 | 13.0000 | NaN | S |
| 887 | 888 | 1 | 1 | Graham, Miss. Margaret Edith | female | 19.0 | 0 | 0 | 112053 | 30.0000 | B42 | S |
| 888 | 889 | 0 | 3 | Johnston, Miss. Catherine Helen "Carrie" | female | NaN | 1 | 2 | W./C. 6607 | 23.4500 | NaN | S |
| 889 | 890 | 1 | 1 | Behr, Mr. Karl Howell | male | 26.0 | 0 | 0 | 111369 | 30.0000 | C148 | C |
| 890 | 891 | 0 | 3 | Dooley, Mr. Patrick | male | 32.0 | 0 | 0 | 370376 | 7.7500 | NaN | Q |

891 rows × 12 columns

df.isna().sum()

PassengerId 0

Survived 0

Pclass 0

Name 0

Sex 0

Age 177

SibSp 0

Parch 0

Ticket 0

Fare 0

Cabin 687

Embarked 2

dtype: int64

modes = df.mode().iloc[0]

df.fillna(modes, inplace=True)

df.isna().sum()

PassengerId 0

Survived 0

Pclass 0

Name 0

Sex 0

Age 0

SibSp 0

Parch 0

Ticket 0

Fare 0

Cabin 0

Embarked 0

dtype: int64

import numpy as np

df.describe(include=(np.number))

| | PassengerId | Survived | Pclass | Age | SibSp | Parch | Fare |
|---|---|---|---|---|---|---|---|
| count | 891.000000 | 891.000000 | 891.000000 | 891.000000 | 891.000000 | 891.000000 | 891.000000 |
| mean | 446.000000 | 0.383838 | 2.308642 | 28.566970 | 0.523008 | 0.381594 | 32.204208 |
| std | 257.353842 | 0.486592 | 0.836071 | 13.199572 | 1.102743 | 0.806057 | 49.693429 |
| min | 1.000000 | 0.000000 | 1.000000 | 0.420000 | 0.000000 | 0.000000 | 0.000000 |
| 25% | 223.500000 | 0.000000 | 2.000000 | 22.000000 | 0.000000 | 0.000000 | 7.910400 |
| 50% | 446.000000 | 0.000000 | 3.000000 | 24.000000 | 0.000000 | 0.000000 | 14.454200 |
| 75% | 668.500000 | 1.000000 | 3.000000 | 35.000000 | 1.000000 | 0.000000 | 31.000000 |
| max | 891.000000 | 1.000000 | 3.000000 | 80.000000 | 8.000000 | 6.000000 | 512.329200 |
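The missing-value step above fills every gap with its column's mode, the single most common value. Here is a minimal pure-Python sketch of that idea. It assumes rows are dicts and that `None` marks a missing value. The helpers `column_mode` and `fill_with_modes` are hypothetical and are not pandas API. Ties go to the smallest value, mirroring pandas, which returns multiple modes in sorted order so that `iloc[0]` picks the first.

```python
from collections import Counter

def column_mode(values):
    """Most common non-missing value; ties resolve to the smallest value."""
    counts = Counter(v for v in values if v is not None)
    top = max(counts.values())
    return min(v for v, c in counts.items() if c == top)

def fill_with_modes(rows):
    """Return new rows (list of dicts) with each None replaced by its column mode."""
    columns = list(rows[0].keys())
    modes = {col: column_mode([r[col] for r in rows]) for col in columns}
    return [{col: (modes[col] if r[col] is None else r[col]) for col in columns}
            for r in rows]

# made-up rows with one missing Age and one missing Embarked
rows = [
    {"Age": 22.0, "Embarked": "S"},
    {"Age": None, "Embarked": "C"},
    {"Age": 24.0, "Embarked": None},
    {"Age": 24.0, "Embarked": "S"},
]
filled = fill_with_modes(rows)
print(filled[1]["Age"], filled[2]["Embarked"])
```

Like `df.fillna(modes)`, this sketch fills categorical and numeric columns the same way. That is crude for a numeric column such as `Age`, but it keeps the first model simple.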

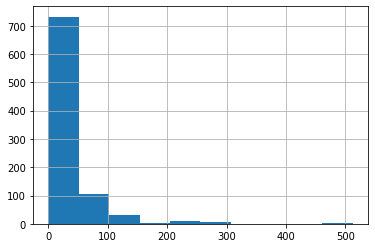

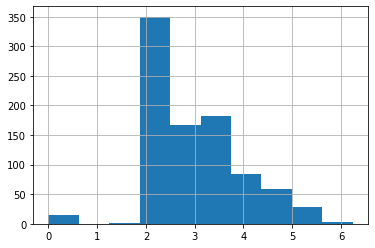

df['Fare'].hist();

df['LogFare'] = np.log1p(df['Fare'])

df['LogFare'].hist();
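`Fare` is strongly right-skewed: a handful of fares reach about 512, while the median is about 14. Taking the logarithm compresses that long tail. `log1p(x)` computes log(1 + x), which stays defined for the zero fares in the data. The stdlib sketch below applies the same transform to the min, quartiles and max of `Fare` taken from the `describe()` table. It then compares how far the maximum sits from the median before and after the transform.

```python
import math

# min, 25%, 50%, 75% and max of Fare, copied from df.describe() above
fares = [0.0, 7.9104, 14.4542, 31.0, 512.3292]
log_fares = [math.log1p(f) for f in fares]   # log(1 + fare); log1p(0) == 0

print([round(v, 3) for v in log_fares])

ratio_before = fares[-1] / fares[2]          # max relative to median, raw scale
ratio_after = log_fares[-1] / log_fares[2]   # the same comparison on the log scale
print(round(ratio_before, 1), round(ratio_after, 2))
```

The last transformed value is about 6.24, the same `LogFare` maximum that appears later when the columns are scaled by their maxima.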

pclasses = sorted(df.Pclass.unique())

pclasses

[1, 2, 3]

df = pd.get_dummies(df, columns=["Sex","Pclass","Embarked"], dtype=int)  # dtype=int: pandas >= 2.0 otherwise creates bool dummies

df.columns

Index(['PassengerId', 'Survived', 'Name', 'Age', 'SibSp', 'Parch', 'Ticket', 'Fare', 'Cabin', 'LogFare', 'Sex_female', 'Sex_male',

'Pclass_1', 'Pclass_2', 'Pclass_3', 'Embarked_C', 'Embarked_Q', 'Embarked_S'],

dtype='object')

added_cols = ['Sex_male', 'Sex_female', 'Pclass_1', 'Pclass_2', 'Pclass_3', 'Embarked_C', 'Embarked_Q', 'Embarked_S']

df[added_cols].head()

| | Sex_male | Sex_female | Pclass_1 | Pclass_2 | Pclass_3 | Embarked_C | Embarked_Q | Embarked_S |
|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 1 |
| 1 | 0 | 1 | 1 | 0 | 0 | 1 | 0 | 0 |
| 2 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 |
| 3 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 1 |
| 4 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 1 |
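`pd.get_dummies` replaces a categorical column with one 0/1 indicator column per category, named `<column>_<value>`. Below is a minimal sketch of that expansion for a single column in plain Python. The function `one_hot` is hypothetical; pandas does the same for several columns at once and handles dtypes. Categories are sorted, which matches the column order visible above (`Embarked_C`, `Embarked_Q`, `Embarked_S`).

```python
def one_hot(values, prefix):
    """Expand a list of categories into 0/1 indicator columns named prefix_<category>."""
    categories = sorted(set(values))
    names = [f"{prefix}_{c}" for c in categories]
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return names, rows

names, rows = one_hot(["S", "C", "S", "S", "Q"], "Embarked")
print(names)
for r in rows:
    print(r)   # exactly one 1 per row
```

Because each row has exactly one 1 per original column, the coefficient on a dummy acts like a per-category offset in the linear model.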

df.Survived

0 0

1 1

2 1

3 1

4 0

..

886 0

887 1

888 0

889 1

890 0

Name: Survived, Length: 891, dtype: int64

from torch import tensor

t_dep = tensor(df.Survived)

df

| | PassengerId | Survived | Name | Age | SibSp | Parch | Ticket | Fare | Cabin | LogFare | Sex_female | Sex_male | Pclass_1 | Pclass_2 | Pclass_3 | Embarked_C | Embarked_Q | Embarked_S |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 0 | Braund, Mr. Owen Harris | 22.0 | 1 | 0 | A/5 21171 | 7.2500 | B96 B98 | 2.110213 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 |
| 1 | 2 | 1 | Cumings, Mrs. John Bradley (Florence Briggs Th... | 38.0 | 1 | 0 | PC 17599 | 71.2833 | C85 | 4.280593 | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 0 |
| 2 | 3 | 1 | Heikkinen, Miss. Laina | 26.0 | 0 | 0 | STON/O2. 3101282 | 7.9250 | B96 B98 | 2.188856 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 1 |
| 3 | 4 | 1 | Futrelle, Mrs. Jacques Heath (Lily May Peel) | 35.0 | 1 | 0 | 113803 | 53.1000 | C123 | 3.990834 | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 |
| 4 | 5 | 0 | Allen, Mr. William Henry | 35.0 | 0 | 0 | 373450 | 8.0500 | B96 B98 | 2.202765 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 886 | 887 | 0 | Montvila, Rev. Juozas | 27.0 | 0 | 0 | 211536 | 13.0000 | B96 B98 | 2.639057 | 0 | 1 | 0 | 1 | 0 | 0 | 0 | 1 |
| 887 | 888 | 1 | Graham, Miss. Margaret Edith | 19.0 | 0 | 0 | 112053 | 30.0000 | B42 | 3.433987 | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 |
| 888 | 889 | 0 | Johnston, Miss. Catherine Helen "Carrie" | 24.0 | 1 | 2 | W./C. 6607 | 23.4500 | B96 B98 | 3.196630 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 1 |
| 889 | 890 | 1 | Behr, Mr. Karl Howell | 26.0 | 0 | 0 | 111369 | 30.0000 | C148 | 3.433987 | 0 | 1 | 1 | 0 | 0 | 1 | 0 | 0 |
| 890 | 891 | 0 | Dooley, Mr. Patrick | 32.0 | 0 | 0 | 370376 | 7.7500 | B96 B98 | 2.169054 | 0 | 1 | 0 | 0 | 1 | 0 | 1 | 0 |

891 rows × 18 columns

indep_cols = ['Age', 'SibSp', 'Parch', 'LogFare'] + added_cols

df[indep_cols].values

array([[22., 1., 0., ..., 0., 0., 1.],

[38., 1., 0., ..., 1., 0., 0.],

[26., 0., 0., ..., 0., 0., 1.],

...,

[24., 1., 2., ..., 0., 0., 1.],

[26., 0., 0., ..., 1., 0., 0.],

[32., 0., 0., ..., 0., 1., 0.]])

t_indep = tensor(df[indep_cols].values, dtype=torch.float)

t_indep

tensor([[22.0000, 1.0000, 0.0000, 2.1102, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[38.0000, 1.0000, 0.0000, 4.2806, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000],

[26.0000, 0.0000, 0.0000, 2.1889, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[35.0000, 1.0000, 0.0000, 3.9908, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[35.0000, 0.0000, 0.0000, 2.2028, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[24.0000, 0.0000, 0.0000, 2.2469, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000],

[54.0000, 0.0000, 0.0000, 3.9677, 1.0000, 0.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

...,

[25.0000, 0.0000, 0.0000, 2.0857, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[39.0000, 0.0000, 5.0000, 3.4054, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000],

[27.0000, 0.0000, 0.0000, 2.6391, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[19.0000, 0.0000, 0.0000, 3.4340, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[24.0000, 1.0000, 2.0000, 3.1966, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[26.0000, 0.0000, 0.0000, 3.4340, 1.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000],

[32.0000, 0.0000, 0.0000, 2.1691, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000]])

t_indep.shape

torch.Size([891, 12])

Linear model

torch.manual_seed(442)

n_coeff = t_indep.shape[1]

coeffs = torch.rand(n_coeff)-0.5

coeffs

tensor([-0.4629, 0.1386, 0.2409, -0.2262, -0.2632, -0.3147, 0.4876, 0.3136, 0.2799, -0.4392, 0.2103, 0.3625])

Dividing each column by its maximum (vals) scales every feature to at most 1, so that Age (up to 80) does not swamp the 0/1 dummy columns in the weighted sum:

vals,indices = t_indep.max(dim=0)

t_indep = t_indep / vals

vals

tensor([80.0000, 8.0000, 6.0000, 6.2409, 1.0000, 1.0000, 1.0000, 1.0000, 1.0000, 1.0000, 1.0000, 1.0000])

indices

tensor([630, 159, 678, 258, 0, 1, 1, 9, 0, 1, 5, 0])

t_indep[0]

tensor([0.2750, 0.1250, 0.0000, 0.3381, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000])

t_indep*coeffs

tensor([[-0.1273, 0.0173, 0.0000, -0.0765, -0.2632, -0.0000, 0.0000, 0.0000, 0.2799, -0.0000, 0.0000, 0.3625],

[-0.2199, 0.0173, 0.0000, -0.1551, -0.0000, -0.3147, 0.4876, 0.0000, 0.0000, -0.4392, 0.0000, 0.0000],

[-0.1504, 0.0000, 0.0000, -0.0793, -0.0000, -0.3147, 0.0000, 0.0000, 0.2799, -0.0000, 0.0000, 0.3625],

[-0.2025, 0.0173, 0.0000, -0.1446, -0.0000, -0.3147, 0.4876, 0.0000, 0.0000, -0.0000, 0.0000, 0.3625],

[-0.2025, 0.0000, 0.0000, -0.0798, -0.2632, -0.0000, 0.0000, 0.0000, 0.2799, -0.0000, 0.0000, 0.3625],

[-0.1389, 0.0000, 0.0000, -0.0814, -0.2632, -0.0000, 0.0000, 0.0000, 0.2799, -0.0000, 0.2103, 0.0000],

[-0.3125, 0.0000, 0.0000, -0.1438, -0.2632, -0.0000, 0.4876, 0.0000, 0.0000, -0.0000, 0.0000, 0.3625],

...,

[-0.1447, 0.0000, 0.0000, -0.0756, -0.2632, -0.0000, 0.0000, 0.0000, 0.2799, -0.0000, 0.0000, 0.3625],

[-0.2257, 0.0000, 0.2008, -0.1234, -0.0000, -0.3147, 0.0000, 0.0000, 0.2799, -0.0000, 0.2103, 0.0000],

[-0.1562, 0.0000, 0.0000, -0.0956, -0.2632, -0.0000, 0.0000, 0.3136, 0.0000, -0.0000, 0.0000, 0.3625],

[-0.1099, 0.0000, 0.0000, -0.1244, -0.0000, -0.3147, 0.4876, 0.0000, 0.0000, -0.0000, 0.0000, 0.3625],

[-0.1389, 0.0173, 0.0803, -0.1158, -0.0000, -0.3147, 0.0000, 0.0000, 0.2799, -0.0000, 0.0000, 0.3625],

[-0.1504, 0.0000, 0.0000, -0.1244, -0.2632, -0.0000, 0.4876, 0.0000, 0.0000, -0.4392, 0.0000, 0.0000],

[-0.1852, 0.0000, 0.0000, -0.0786, -0.2632, -0.0000, 0.0000, 0.0000, 0.2799, -0.0000, 0.2103, 0.0000]])

preds = (t_indep*coeffs).sum(axis=1)

preds[:10]

tensor([ 0.1927, -0.6239, 0.0979, 0.2056, 0.0968, 0.0066, 0.1306, 0.3476, 0.1613, -0.6285])

loss = torch.abs(preds-t_dep).mean()

loss

tensor(0.5382)

t_dep

tensor([0, 1, 1, 1, 0, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 1, 0, 1, 0, 1, 1, 1, 0, 1, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1,

1, 0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 0,

1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0, 0,

0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 0, 1, 1, 1, 0, 1, 0, 0, 0, 1, 1, 0, 1, 0,

1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 0,

0, 0, 0, 1, 1, 1, 0, 1, 1, 0, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 0, 1, 0, 1, 1, 1,

0, 1, 1, 1, 0, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 1, 0, 1, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0,

0, 0, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 0, 0, 0, 1, 1, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 0, 1, 1, 0,

0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 1, 1, 1, 1, 0, 0, 1, 0, 1, 0, 0,

1, 0, 0, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1,

1, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 0, 0, 1, 0,

0, 0, 1, 0, 0, 1, 0, 1, 0, 1, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 1, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1,

1, 1, 0, 0, 1, 1, 0, 1, 1, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 1, 1, 0, 0, 1, 0, 0, 1,

0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 1, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0,

1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0,

0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1,

0, 0, 1, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0,

0, 0, 0, 0, 1, 1, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 1, 1, 1, 1, 1, 0, 0, 0, 1,

0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1,

1, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0])

t_dep.shape

torch.Size([891])

t_indep.shape

torch.Size([891, 12])

def calc_preds(coeffs, indeps): return (indeps*coeffs).sum(axis=1)

def calc_loss(coeffs, indeps, deps): return torch.abs(calc_preds(coeffs, indeps)-deps).mean()

Implementing the gradient descent
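Before handing the work to autograd, it can help to see what `calc_preds`, `calc_loss` and the gradient compute. The sketch below writes them out in plain Python on lists, under these assumptions:

* The features are first divided by their column maxima, as `t_indep / vals` does.
* The derivative of the mean absolute error with respect to coefficient j is the mean over rows of sign(pred − y) · x_j, taking sign(0) = 0 at the kink.

The names mirror the notebook's functions, but `normalize_by_max`, `sign` and `mae_grad` are hypothetical helpers, and the three rows are made up.

```python
def normalize_by_max(rows):
    """Divide every column by its maximum, like t_indep / vals (assumes positive maxima)."""
    maxes = [max(col) for col in zip(*rows)]
    scaled = [[x / m for x, m in zip(row, maxes)] for row in rows]
    return scaled, maxes

def calc_preds(coeffs, indeps):
    """Linear model without a bias term: one weighted sum per row."""
    return [sum(x * c for x, c in zip(row, coeffs)) for row in indeps]

def calc_loss(coeffs, indeps, deps):
    """Mean absolute error between predictions and 0/1 targets."""
    preds = calc_preds(coeffs, indeps)
    return sum(abs(p - y) for p, y in zip(preds, deps)) / len(deps)

def sign(v):
    """-1, 0 or +1."""
    return (v > 0) - (v < 0)

def mae_grad(coeffs, indeps, deps):
    """Gradient of calc_loss w.r.t. each coefficient: mean of sign(pred - y) * x_j."""
    preds = calc_preds(coeffs, indeps)
    n = len(deps)
    return [sum(sign(p - y) * row[j] for p, y, row in zip(preds, deps, indeps)) / n
            for j in range(len(coeffs))]

raw = [[22.0, 1.0, 1.0], [38.0, 0.0, 0.0], [26.0, 0.0, 0.0]]  # toy Age, SibSp, Sex_male
deps = [0, 1, 1]
indeps, maxes = normalize_by_max(raw)
coeffs = [0.1, -0.2, 0.3]
print(round(calc_loss(coeffs, indeps, deps), 4),
      [round(g, 4) for g in mae_grad(coeffs, indeps, deps)])
```

A finite-difference check, nudging one coefficient and measuring the loss change, agrees with `mae_grad` here because no residual sits near zero. That is a handy sanity check before trusting any hand-derived gradient.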

We ask PyTorch to track operations on coeffs, so that it can calculate gradients with respect to them:

coeffs.requires_grad_()

tensor([-0.4629, 0.1386, 0.2409, -0.2262, -0.2632, -0.3147, 0.4876, 0.3136, 0.2799, -0.4392, 0.2103, 0.3625], requires_grad=True)

loss = calc_loss(coeffs, t_indep, t_dep)

loss

tensor(0.5382, grad_fn=<MeanBackward0>)

loss.backward()

coeffs.grad

tensor([-0.0106, 0.0129, -0.0041, -0.0484, 0.2099, -0.2132, -0.1212, -0.0247, 0.1425, -0.1886, -0.0191, 0.2043])

Each time we call backward, the gradients are added to whatever is already in the .grad attribute. If we run it again:

loss = calc_loss(coeffs, t_indep, t_dep)

loss.backward()

coeffs.grad

tensor([-0.0212, 0.0258, -0.0082, -0.0969, 0.4198, -0.4265, -0.2424, -0.0494, 0.2851, -0.3771, -0.0382, 0.4085])

Our .grad values have doubled, because the gradients were added a second time. For this reason, after we use the gradients to take a gradient descent step, we need to set them back to zero.
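The accumulation behaviour can be mimicked without PyTorch. In the sketch below, a hypothetical `Param` class stands in for a tensor with `requires_grad=True` (it is not PyTorch API). Its `backward` helper adds each new gradient into `.grad` rather than overwriting it. The loss is a one-parameter toy, (w·X − Y)², chosen only so the gradient is easy to check by hand.

```python
X, Y, LR = 2.0, 3.0, 0.1   # one made-up training example and a learning rate

class Param:
    """Hypothetical stand-in for a tensor with requires_grad=True."""
    def __init__(self, value):
        self.value = value
        self.grad = 0.0

def loss(w):
    return (w.value * X - Y) ** 2

def backward(w):
    """Add d(loss)/dw = 2 * (w*X - Y) * X into w.grad, accumulating like PyTorch."""
    w.grad += 2 * (w.value * X - Y) * X

w = Param(0.5)
backward(w)
first = w.grad            # gradient after one backward pass
backward(w)
second = w.grad           # the second pass adds to it, so it doubles

w.grad = 0.0              # like coeffs.grad.zero_()
loss_before = loss(w)
backward(w)
w.value -= LR * w.grad    # one gradient descent step using the true gradient
w.grad = 0.0              # reset before the next backward pass
loss_after = loss(w)
print(first, second, loss_before, loss_after)
```

Without the reset, the next step would use the sum of every earlier gradient, which is effectively a growing and unintended learning rate.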

We can now take one gradient descent step and check that our loss decreases:

loss = calc_loss(coeffs, t_indep, t_dep)

loss.backward()  # .grad was never zeroed above, so it now holds the sum of three backward passes

with torch.no_grad():

    coeffs.sub_(coeffs.grad * 0.1)

    coeffs.grad.zero_()

    print(calc_loss(coeffs, t_indep, t_dep))

tensor(0.4945)

Training the linear model

We could use either the fastai API or scikit-learn's API to split the dataset into training and validation sets.
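Whichever library does the split, a random split amounts to shuffling the row indices and carving off a fraction for validation. The stdlib sketch below assumes a 20% validation fraction. That is the default `valid_pct` of fastai's `RandomSplitter`, and it agrees with the 713/178 sizes reported below. In scikit-learn, `sklearn.model_selection.train_test_split` plays a similar role. The function `random_split` is hypothetical and uses Python's own RNG, so it will not reproduce fastai's exact indices for `seed=42`.

```python
import random

def random_split(n_rows, valid_pct=0.2, seed=42):
    """Shuffle row indices and return (train_idx, valid_idx)."""
    idx = list(range(n_rows))
    random.Random(seed).shuffle(idx)   # private RNG: the global random state is untouched
    cut = int(valid_pct * n_rows)      # 891 rows -> 178 validation rows
    return idx[cut:], idx[:cut]

trn_idx, val_idx = random_split(891)
print(len(trn_idx), len(val_idx))
```

Fixing the seed makes the split reproducible, so validation scores from different runs are compared on the same rows.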

!pip install fastbook

import fastbook

fastbook.setup_book()

from fastai.vision.all import *

from fastbook import *

import torch.nn.functional as F

from fastai.data.transforms import RandomSplitter

trn_split,val_split=RandomSplitter(seed=42)(df)

t_indep

tensor([[0.2750, 0.1250, 0.0000, 0.3381, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[0.4750, 0.1250, 0.0000, 0.6859, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000],

[0.3250, 0.0000, 0.0000, 0.3507, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[0.4375, 0.1250, 0.0000, 0.6395, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[0.4375, 0.0000, 0.0000, 0.3530, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[0.3000, 0.0000, 0.0000, 0.3600, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000],

[0.6750, 0.0000, 0.0000, 0.6358, 1.0000, 0.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

...,

[0.3125, 0.0000, 0.0000, 0.3342, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[0.4875, 0.0000, 0.8333, 0.5456, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000],

[0.3375, 0.0000, 0.0000, 0.4229, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[0.2375, 0.0000, 0.0000, 0.5502, 0.0000, 1.0000, 1.0000, 0.0000, 0.0000, 0.0000, 0.0000, 1.0000],

[0.3000, 0.1250, 0.3333, 0.5122, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000],

[0.3250, 0.0000, 0.0000, 0.5502, 1.0000, 0.0000, 1.0000, 0.0000, 0.0000, 1.0000, 0.0000, 0.0000],

[0.4000, 0.0000, 0.0000, 0.3476, 1.0000, 0.0000, 0.0000, 0.0000, 1.0000, 0.0000, 1.0000, 0.0000]])

trn_split

(#713) [788,525,821,253,374,98,215,313,281,305...]

trn_indep,val_indep = t_indep[trn_split],t_indep[val_split]

trn_dep,val_dep = t_dep[trn_split],t_dep[val_split]

len(trn_indep),len(val_indep)

(713, 178)

len(trn_dep), len(val_dep)

(713, 178)

def update_coeffs(coeffs, lr):

    coeffs.sub_(coeffs.grad * lr)

    coeffs.grad.zero_()

def one_epoch(coeffs, lr):

    loss = calc_loss(coeffs, trn_indep, trn_dep)

    loss.backward()

    with torch.no_grad(): update_coeffs(coeffs, lr)

    print(f"{loss:.3f}", end="; ")

def init_coeffs(): return (torch.rand(n_coeff)-0.5).requires_grad_()

def train_model(epochs=30, lr=0.01):

    torch.manual_seed(442)

    coeffs = init_coeffs()

    for i in range(epochs): one_epoch(coeffs, lr=lr)

    return coeffs

coeffs = train_model(18, lr=0.2)

0.536; 0.502; 0.477; 0.454; 0.431; 0.409; 0.388; 0.367; 0.349; 0.336; 0.330; 0.326; 0.329; 0.304; 0.314; 0.296; 0.300; 0.289;

def show_coeffs(): return dict(zip(indep_cols, coeffs.requires_grad_(False)))
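`train_model` repeats `one_epoch` a fixed number of times. Each epoch computes the training loss, gets the gradient, updates the coefficients with the learning rate, and clears the gradient. The plain-Python sketch below runs that loop on a made-up three-row dataset, using the analytic MAE gradient (the mean of sign(error) · x_j) instead of autograd. The function `train_linear_mae` is hypothetical. It starts from all-zero coefficients rather than the notebook's random ones.

```python
def train_linear_mae(indeps, deps, epochs=30, lr=0.1):
    """Full-batch gradient descent on mean absolute error; returns (coeffs, losses)."""
    coeffs = [0.0] * len(indeps[0])
    losses = []
    for _ in range(epochs):
        preds = [sum(x * c for x, c in zip(row, coeffs)) for row in indeps]
        errors = [p - y for p, y in zip(preds, deps)]
        losses.append(sum(abs(e) for e in errors) / len(errors))
        # MAE gradient: mean over rows of sign(error) * feature j
        signs = [(e > 0) - (e < 0) for e in errors]
        grads = [sum(s * row[j] for s, row in zip(signs, indeps)) / len(errors)
                 for j in range(len(coeffs))]
        # gradients are rebuilt from scratch each epoch, playing the role of grad.zero_()
        coeffs = [c - lr * g for c, g in zip(coeffs, grads)]
    return coeffs, losses

indeps = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # made-up scaled features
deps = [1, 0, 1]
coeffs, losses = train_linear_mae(indeps, deps)
print("; ".join(f"{v:.3f}" for v in losses[:5]), "...", f"{losses[-1]:.3f}")
```

With MAE the gradient has the same magnitude however close the predictions are, so a fixed learning rate tends to make the loss wobble near the end. The small ups and downs in the epoch losses printed above show the same pattern.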

show_coeffs()

{'Age': tensor(-0.2694),

'SibSp': tensor(0.0901),

'Parch': tensor(0.2359),

'LogFare': tensor(0.0280),

'Sex_male': tensor(-0.3990),

'Sex_female': tensor(0.2345),

'Pclass_1': tensor(0.7232),

'Pclass_2': tensor(0.4112),

'Pclass_3': tensor(0.3601),

'Embarked_C': tensor(0.0955),

'Embarked_Q': tensor(0.2395),

'Embarked_S': tensor(0.2122)}

preds = calc_preds(coeffs, val_indep)

We'll assume that any passenger with a score over 0.5 is predicted to survive. That means our prediction is correct for each row where preds>0.5 matches the dependent variable:

results = val_dep.bool()==(preds>0.5)

results[:16]

tensor([ True, True, True, True, True, True, True, True, True, True, False, False, False, True, True, False])

results.float().mean()

tensor(0.7865)

val_dep.bool()

tensor([ True, False, False, False, False, False, True, True, False, True, True, True, True, True, False, True, False, True, False, True, False, False, True, True, False, False, True,

False, False, True, True, False, False, False, True, False, False, True, False, False, True, False, False, True, False, False, True, False, False, True, False, False, False, False,

False, False, False, False, False, False, False, False, True, False, False, False, False, False, False, True, False, False, False, False, False, False, False, True, False, False, True,

True, False, False, True, False, False, True, True, False, False, False, True, False, True, False, False, True, True, False, False, True, False, False, True, True, True, True,

True, False, True, False, True, True, False, True, False, False, False, True, False, True, False, True, False, False, False, True, False, False, True, False, True, True, True,

True, False, False, False, True, False, True, False, False, False, True, False, True, True, False, False, False, True, False, True, False, False, False, True, False, True, False,

False, True, True, True, True, True, False, False, False, False, True, False, True, True, True, True])

val_dep

tensor([1, 0, 0, 0, 0, 0, 1, 1, 0, 1, 1, 1, 1, 1, 0, 1, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0,

0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 1, 1, 0, 1, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 0, 1,

0, 0, 1, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 1, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 1, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1])

def acc(coeffs): return (val_dep.bool()==(calc_preds(coeffs, val_indep)>0.5)).float().mean()
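`acc` turns each score into a yes/no prediction with a strict `> 0.5` test and averages how often that agrees with the label. The same computation on plain lists, with made-up scores and targets (the name `accuracy` is hypothetical), looks like this:

```python
def accuracy(preds, targets, threshold=0.5):
    """Fraction of rows where (pred > threshold) agrees with the 0/1 target."""
    hits = [(p > threshold) == bool(t) for p, t in zip(preds, targets)]
    return sum(hits) / len(hits)

preds = [0.9, 0.2, 0.6, 0.4, 0.51]   # made-up scores
targets = [1, 0, 0, 1, 1]
print(accuracy(preds, targets))
```

Because the comparison is strict, a score of exactly 0.5 counts as "did not survive".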

acc(coeffs)

tensor(0.7865)

Using sigmoid to keep preds between 0 and 1
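The sigmoid, 1 / (1 + e^(−x)), maps any real number into the open interval (0, 1). It equals exactly 0.5 at x = 0, so thresholding a sigmoid output at 0.5 is the same as asking whether the raw weighted sum is positive. Large positive sums saturate towards 1 and large negative sums towards 0. The stdlib sketch below is written in the usual two-branch form so that `math.exp` never overflows.

```python
import math

def sigmoid(x):
    """Logistic function 1 / (1 + exp(-x)), computed without overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)          # x < 0 here, so exp(x) <= 1
    return z / (1.0 + z)

for x in [-22.0, -3.0, 0.0, 3.0, 12.3]:
    print(x, round(sigmoid(x), 4))
```

Since the outputs can never go below 0 or above 1, no prediction can overshoot a 0/1 target, which the plain weighted sum could do.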

def calc_preds(coeffs, indeps): return torch.sigmoid((indeps*coeffs).sum(axis=1))

coeffs = train_model(lr=100)

0.510; 0.327; 0.294; 0.207; 0.201; 0.199; 0.198; 0.197; 0.196; 0.196; 0.196; 0.195; 0.195; 0.195; 0.195; 0.195; 0.195; 0.195; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194;

Check the accuracy:

acc(coeffs)

tensor(0.8258)

show_coeffs()

{'Age': tensor(-1.5061),

'SibSp': tensor(-1.1575),

'Parch': tensor(-0.4267),

'LogFare': tensor(0.2543),

'Sex_male': tensor(-10.3320),

'Sex_female': tensor(8.4185),

'Pclass_1': tensor(3.8389),

'Pclass_2': tensor(2.1398),

'Pclass_3': tensor(-6.2331),

'Embarked_C': tensor(1.4771),

'Embarked_Q': tensor(2.1168),

'Embarked_S': tensor(-4.7958)}

Putting it all together towards a submission to Kaggle

The test set needs the same preprocessing as the training set, reusing the training modes and the training column maxima (vals):

tst_df = pd.read_csv(path/'test.csv')

tst_df['Fare'] = tst_df.Fare.fillna(0)

tst_df.fillna(modes, inplace=True)

tst_df['LogFare'] = np.log(tst_df['Fare']+1)

tst_df = pd.get_dummies(tst_df, columns=["Sex","Pclass","Embarked"], dtype=int)  # dtype=int: pandas >= 2.0 otherwise creates bool dummies

tst_indep = tensor(tst_df[indep_cols].values, dtype=torch.float)

tst_indep = tst_indep / vals

tst_df['Survived'] = (calc_preds(coeffs, tst_indep)>0.5).int()

sub_df = tst_df[['PassengerId','Survived']]

sub_df.to_csv('sub.csv', index=False)

Using matrix product
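Multiplying each row elementwise by the coefficients and summing, which is what `(indeps*coeffs).sum(axis=1)` does, is exactly a matrix-vector product, and `@` computes that directly. The same holds when the coefficients are stored as a `(k, 1)` column; the result is then an `(n, 1)` column of predictions rather than a flat vector. The plain-Python check below compares both forms on a tiny made-up matrix. The helpers `matmul` and `rowwise_sum` are hypothetical.

```python
def matmul(a, b):
    """Matrix product of an (n x k) list-of-lists by a (k x p) list-of-lists."""
    assert len(a[0]) == len(b), "inner dimensions must match"
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def rowwise_sum(m, v):
    """Same result as (m * v).sum(axis=1) for a flat coefficient vector v."""
    return [sum(x * c for x, c in zip(row, v)) for row in m]

m = [[0.275, 0.125, 1.0], [0.475, 0.125, 0.0]]   # two made-up scaled rows
v = [-1.5, -1.2, 8.4]                            # flat coefficients
v_col = [[c] for c in v]                         # the same coefficients as a (3 x 1) column

flat = rowwise_sum(m, v)
col = matmul(m, v_col)                           # (2 x 1): one prediction per row
print(flat, col)
```

In PyTorch the `@` form hands the whole computation to an optimized matrix-multiply routine instead of building the intermediate elementwise product.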

val_indep@coeffs

tensor([ 12.3288, -14.8119, -15.4540, -13.1513, -13.3511, -13.6468, 3.6248, 5.3429, -22.0878, 3.1233, -21.8742, -15.6421, -21.5504, 3.9393, -21.9190, -12.0010, -12.3775, 5.3550, -13.5880,

-3.1015, -21.7237, -12.2081, 12.9767, 4.7427, -21.6525, -14.9135, -2.7433, -12.3210, -21.5886, 3.9387, 5.3890, -3.6196, -21.6296, -21.8454, 12.2159, -3.2275, -12.0289, 13.4560,

-21.7230, -3.1366, -13.2462, -21.7230, -13.6831, 13.3092, -21.6477, -3.5868, -21.6854, -21.8316, -14.8158, -2.9386, -5.3103, -22.2384, -22.1097, -21.7466, -13.3780, -13.4909, -14.8119,

-22.0690, -21.6666, -21.7818, -5.4439, -21.7407, -12.6551, -21.6671, 4.9238, -11.5777, -13.3323, -21.9638, -15.3030, 5.0243, -21.7614, 3.1820, -13.4721, -21.7170, -11.6066, -21.5737,

-21.7230, -11.9652, -13.2382, -13.7599, -13.2170, 13.1347, -21.7049, -21.7268, 4.9207, -7.3198, -5.3081, 7.1065, 11.4948, -13.3135, -21.8723, -21.7230, 13.3603, -15.5670, 3.4105,

-7.2857, -13.7197, 3.6909, 3.9763, -14.7227, -21.8268, 3.9387, -21.8743, -21.8367, -11.8518, -13.6712, -21.8299, 4.9440, -5.4471, -21.9666, 5.1333, -3.2187, -11.6008, 13.7920,

-21.7230, 12.6369, -3.7268, -14.8119, -22.0637, 12.9468, -22.1610, -6.1827, -14.8119, -3.2838, -15.4540, -11.6950, -2.9926, -3.0110, -21.5664, -13.8268, 7.3426, -21.8418, 5.0744,

5.2582, 13.3415, -21.6289, -13.9898, -21.8112, -7.3316, 5.2296, -13.4453, 12.7891, -22.1235, -14.9625, -3.4339, 6.3089, -21.9839, 3.1968, 7.2400, 2.8558, -3.1187, 3.7965,

5.4667, -15.1101, -15.0597, -22.9391, -21.7230, -3.0346, -13.5206, -21.7011, 13.4425, -7.2690, -21.8335, -12.0582, 13.0489, 6.7993, 5.2160, 5.0794, -12.6957, -12.1838, -3.0873,

-21.6070, 7.0744, -21.7170, -22.1001, 6.8159, -11.6002, -21.6310])

```python
# Predictions are now a single matrix product followed by the sigmoid
def calc_preds(coeffs, indeps): return torch.sigmoid(indeps@coeffs)
```

```python
# Coefficients as an (n_coeff, 1) column, so predictions come out as an (n, 1) column
def init_coeffs(): return (torch.rand(n_coeff, 1)*0.1).requires_grad_()
```

```python
trn_dep
```

tensor([1, 0, 1, 0, 0, 1, 1, 0, 0, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0,

0, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 1, 0, 1, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0, 1,

0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 1, 0, 0, 0, 0, 1, 1, 1, 1, 0, 1, 1, 0, 0, 0,

1, 1, 0, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1, 1, 0,

1, 1, 1, 0, 0, 1, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 1, 0, 0, 1, 1, 1, 1, 0, 0, 1, 1, 1, 0, 1, 0, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0, 1, 1, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 1,

0, 0, 0, 0, 0, 1, 1, 1, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1, 1, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0, 1, 0, 0, 0, 1,

0, 1, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 0, 1, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 0, 1, 0,

1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 1,

0, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0, 0, 0, 0,

1, 0, 1, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0,

0, 1, 0, 0, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0, 0,

1, 1, 0, 0, 0, 0, 1, 0, 0])

```python
# Reshape the targets into (n, 1) columns to match the shape of the predictions
trn_dep = trn_dep[:,None]
val_dep = val_dep[:,None]
```

```python
trn_dep.shape
```

torch.Size([713, 1])

```python
coeffs = train_model(lr=100)
```

0.512; 0.323; 0.290; 0.205; 0.200; 0.198; 0.197; 0.197; 0.196; 0.196; 0.196; 0.195; 0.195; 0.195; 0.195; 0.195; 0.195; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194; 0.194;

```python
acc(coeffs)
```

tensor(0.8258)

Neural network implementation
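The network in this section places a ReLU between two matrix products. That nonlinearity is what makes the hidden layer worthwhile. Without it, two stacked linear layers collapse into a single linear map, because `(x @ l1) @ l2` equals `x @ (l1 @ l2)`. The sketch below checks this numerically on random stand-in tensors; the names and sizes are hypothetical.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
x = torch.randn(6, 4)    # hypothetical batch: 6 rows, 4 features
l1 = torch.randn(4, 5)   # hidden-layer weights
l2 = torch.randn(5, 1)   # output weights

two_linear = (x @ l1) @ l2   # two matrix products, no activation in between
one_linear = x @ (l1 @ l2)   # a single (4, 1) weight matrix gives the same result
print(torch.allclose(two_linear, one_linear, atol=1e-5))   # True

with_relu = F.relu(x @ l1) @ l2   # clipping negatives breaks the collapse
print(torch.allclose(with_relu, one_linear, atol=1e-5))    # differs in general
```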

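```python
def init_coeffs(n_hidden=20):
    # (n_coeff, n_hidden) weights, centred on 0 and scaled down by n_hidden
    layer1 = (torch.rand(n_coeff, n_hidden)-0.5)/n_hidden
    # (n_hidden, 1) weights for the output layer
    layer2 = torch.rand(n_hidden, 1)-0.3
    # scalar constant added to the output
    const = torch.rand(1)[0]
    return layer1.requires_grad_(),layer2.requires_grad_(),const.requires_grad_()
```

```python
import torch.nn.functional as F
```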
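`update_coeffs` in this section changes each tensor in `coeffs` in place. PyTorch refuses in-place operations on a leaf tensor that requires gradients while autograd is recording. The update therefore has to run with tracking switched off. Presumably `train_model`, which is not shown in this section, wraps the call in `torch.no_grad()`; treat that as an assumption. This sketch uses a single hypothetical weight tensor `w` to show both behaviours.

```python
import torch

torch.manual_seed(0)
w = torch.rand(3, 1).requires_grad_()   # leaf tensor, like each tensor in coeffs
x = torch.rand(4, 3)                    # hypothetical inputs
(x @ w).mean().backward()               # fills w.grad

before = w.detach().clone()
lr = 0.1

raised = False
try:
    w.sub_(w.grad * lr)                 # in-place update while autograd is recording
except RuntimeError:
    raised = True                       # rejected: in-place op on a leaf that requires grad

with torch.no_grad():                   # switch off tracking for the update itself
    w.sub_(w.grad * lr)                 # the same step update_coeffs performs
    w.grad.zero_()                      # reset so the next backward() starts from zero

print(raised, torch.equal(w.detach(), before))   # True False
```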

```python
def calc_preds(coeffs, indeps):
    l1,l2,const = coeffs
    res = F.relu(indeps@l1)    # hidden layer: linear map followed by ReLU
    res = res@l2 + const       # output layer: one value per row
    return torch.sigmoid(res)  # squash into (0, 1)
```

```python
def update_coeffs(coeffs, lr):
    for layer in coeffs:
        layer.sub_(layer.grad * lr)  # gradient-descent step, in place
        layer.grad.zero_()           # reset the gradient for the next backward pass
```

```python
coeffs = train_model(lr=1.4)
```

0.543; 0.532; 0.520; 0.505; 0.487; 0.466; 0.439; 0.407; 0.373; 0.343; 0.319; 0.301; 0.286; 0.274; 0.264; 0.256; 0.250; 0.245; 0.240; 0.237; 0.234; 0.231; 0.229; 0.227; 0.226; 0.224; 0.223; 0.222; 0.221; 0.220;

```python
coeffs = train_model(lr=20)
```

0.543; 0.400; 0.260; 0.390; 0.221; 0.211; 0.197; 0.195; 0.193; 0.193; 0.193; 0.193; 0.193; 0.193; 0.193; 0.193; 0.193; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192; 0.192;

```python
acc(coeffs)
```

tensor(0.8258)

Deep learning implementation

```python
def init_coeffs():
    hiddens = [10, 10]  # <-- set this to the size of each hidden layer you want
    sizes = [n_coeff] + hiddens + [1]
    n = len(sizes)
    layers = [(torch.rand(sizes[i], sizes[i+1])-0.3)/sizes[i+1]*4 for i in range(n-1)]
    consts = [(torch.rand(1)[0]-0.5)*0.1 for i in range(n-1)]
    for l in layers+consts: l.requires_grad_()
    return layers,consts
```

```python
import torch.nn.functional as F
```
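The `sizes` list in `init_coeffs` drives the architecture. Each consecutive pair `(sizes[i], sizes[i+1])` becomes one weight matrix. The output width of one layer is therefore always the input height of the next, and the matrix products chain cleanly down to a single `(n, 1)` column. This sketch assumes 12 input features purely for illustration. The random weights stand in for trained ones.

```python
import torch
import torch.nn.functional as F

n_coeff = 12                       # hypothetical number of input features
hiddens = [10, 10]
sizes = [n_coeff] + hiddens + [1]

# One weight matrix per consecutive pair of sizes
shapes = [(sizes[i], sizes[i+1]) for i in range(len(sizes)-1)]
print(shapes)                      # [(12, 10), (10, 10), (10, 1)]

# Chaining the products: ReLU after every layer except the last
res = torch.rand(5, n_coeff)       # 5 hypothetical rows
for i, (r, c) in enumerate(shapes):
    res = res @ torch.rand(r, c)
    if i != len(shapes) - 1: res = F.relu(res)
print(res.shape)                   # torch.Size([5, 1])
```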

```python
def calc_preds(coeffs, indeps):
    layers,consts = coeffs
    n = len(layers)
    res = indeps
    for i,l in enumerate(layers):
        res = res@l + consts[i]
        if i!=n-1: res = F.relu(res)  # ReLU activation on every hidden layer
    return torch.sigmoid(res)         # sigmoid activation on the output layer
```

```python
def update_coeffs(coeffs, lr):
    layers,consts = coeffs
    for layer in layers+consts:
        layer.sub_(layer.grad * lr)  # gradient-descent step, in place
        layer.grad.zero_()           # reset the gradient for the next backward pass
```

```python
coeffs = train_model(lr=4)
```

0.521; 0.483; 0.427; 0.379; 0.379; 0.379; 0.379; 0.378; 0.378; 0.378; 0.378; 0.378; 0.378; 0.378; 0.378; 0.378; 0.377; 0.376; 0.371; 0.333; 0.239; 0.224; 0.208; 0.204; 0.203; 0.203; 0.207; 0.197; 0.196; 0.195;

```python
acc(coeffs)
```

tensor(0.8258)
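For comparison only (this is not part of the walkthrough above), a similar architecture can be written with PyTorch's built-in modules. The sketch makes two assumptions. First, the input size of 12 is hypothetical. Second, it uses mean absolute error as the loss, which may or may not match the loss inside `train_model`. There are also two real differences from the hand-written version. `nn.Linear` has a bias per output unit rather than one scalar constant per layer, and it uses its own default initialization. `torch.optim.SGD` without momentum performs the same `p -= lr * p.grad` step as `update_coeffs`.

```python
import torch
from torch import nn

n_coeff, hiddens = 12, [10, 10]   # hypothetical sizes
sizes = [n_coeff] + hiddens + [1]

mods = []
for i in range(len(sizes) - 1):
    mods.append(nn.Linear(sizes[i], sizes[i+1]))   # weights plus a bias per output unit
    mods.append(nn.ReLU() if i < len(sizes) - 2 else nn.Sigmoid())
model = nn.Sequential(*mods)

x = torch.rand(5, n_coeff)                 # hypothetical inputs
y = torch.randint(0, 2, (5, 1)).float()    # hypothetical 0/1 targets, already column-shaped

opt = torch.optim.SGD(model.parameters(), lr=0.1)
loss = torch.abs(model(x) - y).mean()      # mean absolute error (assumed loss)
loss.backward()
opt.step()        # p -= lr * p.grad for every parameter, like update_coeffs
opt.zero_grad()   # clears gradients, like layer.grad.zero_()

preds = model(x)
print(preds.shape)   # torch.Size([5, 1])
```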